PolitiFact offered this as an image of the kind of mass violence that it’s “mostly false” to say happens more frequently in the United States. (Curtis Compton/Atlanta Journal-Constitution/TNS)

In theory, factchecking is one of the most important functions of journalism. In practice, systematic efforts by corporate media to “factcheck” political statements are often worse than useless.

Take PolitiFact, a project of the Tampa Bay Times, and its recent offering “Is Barack Obama Correct That Mass Killings Don’t Happen in Other Countries?” (6/22/15).

The first thing to note is that this isn’t what Obama said. The statement that PolitiFact’s Keely Herring and Louis Jacobson “factchecked” was this:

Now is the time for mourning and for healing. But let’s be clear: At some point, we as a country will have to reckon with the fact that this type of mass violence does not happen in other advanced countries. It doesn’t happen in other places with this kind of frequency. And it is in our power to do something about it.

It’s generally understood that when people make a series of statements, they’re referring to the same reality in each statement—so you interpret their statements so that they make sense as a whole. But that’s not how PolitiFact interprets statements; instead, it analyzes each sentence in isolation. For example, it says it rated Obama’s statement as “Mostly False,” because—taken on its own—Obama’s statement that “this type of mass violence does not happen in other advanced countries” is not true. Never mind that “the White House argues that Obama’s second sentence qualifies the first,” as PolitiFact acknowledges; that’s how ordinary people interpret language, not media factcheckers.

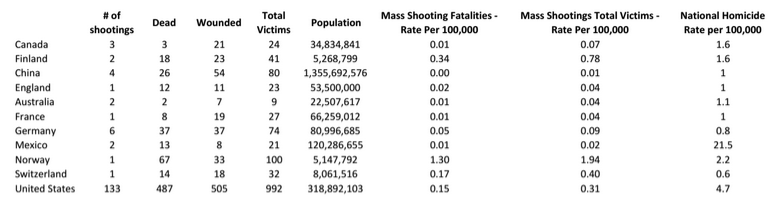

PolitiFact also has doubts about the other part of Obama’s remarks—that mass violence “doesn’t happen in other places with this kind of frequency”—because if you take the number of people killed in mass violence and divide by total population, you find that there’s a handful of countries—notably Norway, where 67 people were killed in a single mass-shooting incident—where people have died at a higher rate in shooting sprees between 2000 and 2014:

“The fact that three other countries exceeded the United States using this method of comparison does weaken Obama’s claim that ‘it doesn’t happen in other places with this kind of frequency,'” PolitiFact says.

Now, it seems to me that PolitiFact is confusing the ideas of frequency and rate: One would normally say that murders happen more frequently in New York City than in Indianapolis, because New York has more murders in a year, and that Indianapolis has a higher homicide rate than New York City, because Indianapolis has more murders per year per person. That, I submit, is the ordinary way that English-speaking people use those terms.
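To make the distinction concrete, here is a minimal sketch of the two calculations. The two cities and all of their figures are invented purely for illustration; they are not real crime statistics for New York, Indianapolis or anywhere else, and the function names are hypothetical.

```python
def frequency(incidents: int, years: int) -> float:
    """How often something happens: incidents per year."""
    return incidents / years


def rate_per_100k(incidents: int, years: int, population: int) -> float:
    """Incidents per year per 100,000 residents."""
    return incidents * 100_000 / (years * population)


# Hypothetical figures, made up for this example only.
big_city = {"incidents": 400, "years": 1, "population": 8_000_000}
small_city = {"incidents": 100, "years": 1, "population": 800_000}

big_freq = frequency(big_city["incidents"], big_city["years"])
small_freq = frequency(small_city["incidents"], small_city["years"])
big_rate = rate_per_100k(**big_city)
small_rate = rate_per_100k(**small_city)

# The larger city has the higher frequency; the smaller city has the higher rate.
print(f"frequency: big={big_freq:.0f}/yr, small={small_freq:.0f}/yr")
print(f"rate: big={big_rate:.1f}, small={small_rate:.1f} per 100,000 per year")
```

With these made-up numbers, the same data supports both "it happens more often in the big city" and "the small city has the higher rate," which is why it matters which of the two a speaker is actually claiming.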

But whether or not it’s possible to use “frequency” as synonymous with “rate,” it’s obvious that that’s not how Obama was using the word. It’s clear that he was using a common definition of the word (“the number of times that something happens during a particular period”—Merriam-Webster) to indicate that mass violence occurs much more often in the United States than in other advanced countries. Which it does, as the chart above demonstrates—22 times more often than any other country listed.

Again, ordinary people understand that words can have various meanings and figure out which one the speaker intends, whereas media factcheckers first decide what a word means and then figure out if what the speaker intends to convey fits with the meaning the factcheckers have decided to use.

This is the approach by which the president can state that mass violence “doesn’t happen in other places with this kind of frequency,” a factchecking organization can turn up data showing that there were 133 mass-shooting incidents in the United States over a 15-year period vs. six in the country with the second-highest number of shooting sprees—and conclude from this that the president’s statement is “Mostly False.”

Obviously, this is not at all helpful to the US public, who have a vital interest in knowing whether mass violence occurs more frequently in their country than elsewhere. It is, however, a big help in maintaining PolitiFact’s brand as a nonpartisan factchecking service. Fellow media factchecker Brooks Jackson (of FactCheck.org) explained how the gig works (Extra!, 12/12):

Even if we could come up with a scholarly and factual way to say that one candidate is being more deceptive than another, I think we probably wouldn’t just because it would look like we were endorsing the other candidate.

Or as Peter Hart concluded from Jackson’s explanation of how media factcheckers work:

They are people who carefully arrange each chip in an effort to create the illusion that they let the chips fall where they may.

Jim Naureckas is the editor of FAIR.org.

Messages to PolitiFact can be sent here (or via Twitter @PolitiFact). Please remember that respectful communication is the most effective.

I notice that in the correction the authors imply that anyone correcting them is a piece of shit:

“We know some of you will disagree, and we’ll be sure to air out some of your objections in our next reader mailbag.”

I lost a huge amount of respect for Politifact when in a private email to me they admitted an error in scoring a statement by Palin as correct, and then didn’t fix the post and make clear that Palin was of course wrong.

It was regarding military spending being the biggest US budget item, and PolitiFact conveniently ignored the fact that the Department of Energy develops weapons and that wars are funded separately from the Pentagon’s budget.

Palin had claimed that Social Security (with its own dedicated funding) plus Medicare (again with its own funding) and Medicaid spent more than the military.

Politifact had twisted the facts to make Palin’s claim “correct”.

They are to be ignored, even if they happen to agree with one.

And I think we know what those chips are composed up, don’t we just?

Not adjusting for sample size is *always* dishonest. If your interpretation of what Obama meant is correct, he deserves a Pants on Fire rating.

“[C]omposed of”, I meant to say.

Oy …